LLM Seeding: An AI Search Strategy to Get Mentioned and Cited

Winning on Google no longer means winning the internet. Your SEO might be perfect, but ChatGPT is still ignoring you.

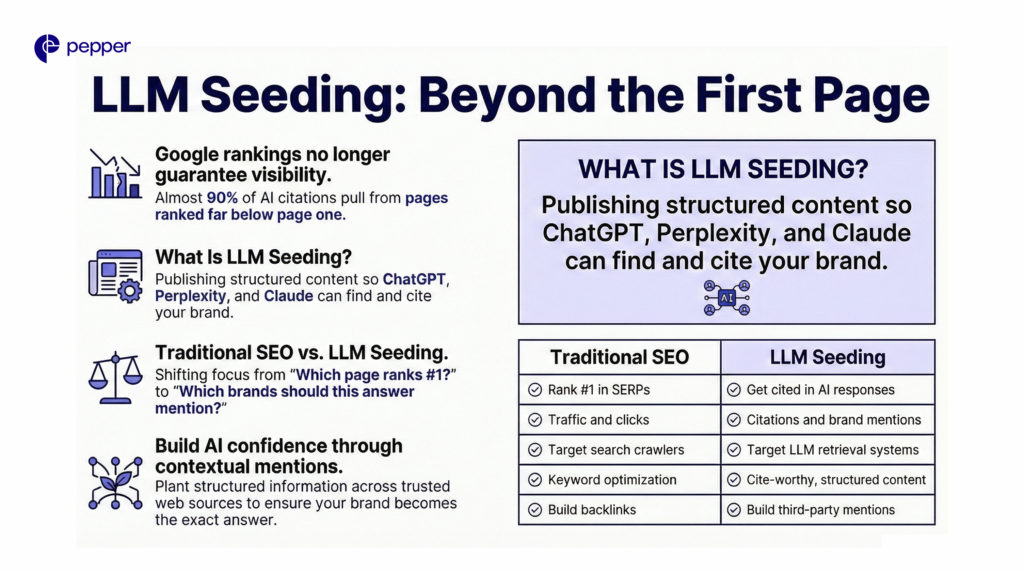

The rules of search have changed. Nearly 90% of ChatGPT citations come from URLs ranked at position 21 or lower, far below page one.

Your buyers are evolving fast. A growing share of B2B prospects now start their journey with an AI prompt rather than a traditional search engine.

LLM seeding bridges this gap. It ensures your brand becomes the exact answer your audience sees.

The AI Citation Cheat Sheet

1. Ranking #1 doesn’t guarantee AI mentions: nearly 90% of citations come from lower-ranked pages.
2. LLM seeding focuses on brand mentions, not just traffic and clicks.
3. Third-party validation (Reddit, reviews, publications) drives citation trust.
4. Structure matters: question-based headings, 120-180 word sections, and strong data increase extractability.
5. Measure AI share-of-voice across platforms, not just SEO rankings.

What Is LLM Seeding?

LLM seeding is the practice of publishing and distributing content so that large language models, such as ChatGPT, Perplexity, Google AI Overviews, and Claude, can easily find, understand, and cite your brand when answering questions.

The term “seeding” describes exactly how it works: You plant structured information about your brand across multiple trusted sources on the web. Over time, as AI models encounter your brand repeatedly in similar contexts, they develop confidence in citing you.

| Remember: LLM seeding adds a parallel strategy for the growing share of buyers who research on AI platforms before ever reaching Google. |

Here’s the fundamental shift: Traditional SEO optimizes for “Which page should rank #1?” LLM seeding optimizes for “Which brands should this answer mention?”

| Traditional SEO | LLM Seeding |
|---|---|
| Rank #1 in SERPs | Get cited in AI responses |
| Traffic and clicks | Citations and brand mentions |
| Target search crawlers | Target LLM retrieval systems |
| Keyword optimization | Cite-worthy, structured content |
| Build backlinks | Build third-party mentions |

How LLMs Retrieve and Cite Content

When you ask ChatGPT a question, it doesn’t search the way Google does. It uses retrieval-augmented generation (RAG): it combines what the model learned during training with real-time web search results, then synthesizes an answer from multiple sources.

LLMs are trained on massive datasets scraped from the web. C4 (the Colossal Clean Crawled Corpus), for example, is a cleaned subset of Common Crawl containing roughly 750GB of web text from high-authority publications, niche sites, and user-generated content. At answer time, AI systems weight sources by trustworthiness, recency, and relevance.

This helps explain why Reddit leads citation frequency at 40.1%, followed by Wikipedia at 26.3%. AI models favor human consensus: forums, reviews, and third-party validation carry more weight than branded content.

How to Implement LLM Seeding

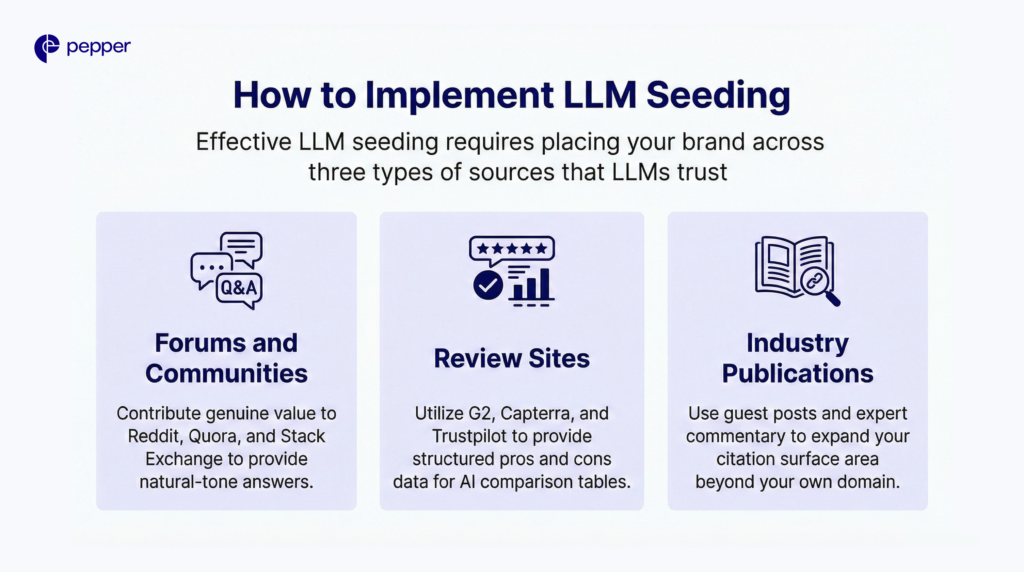

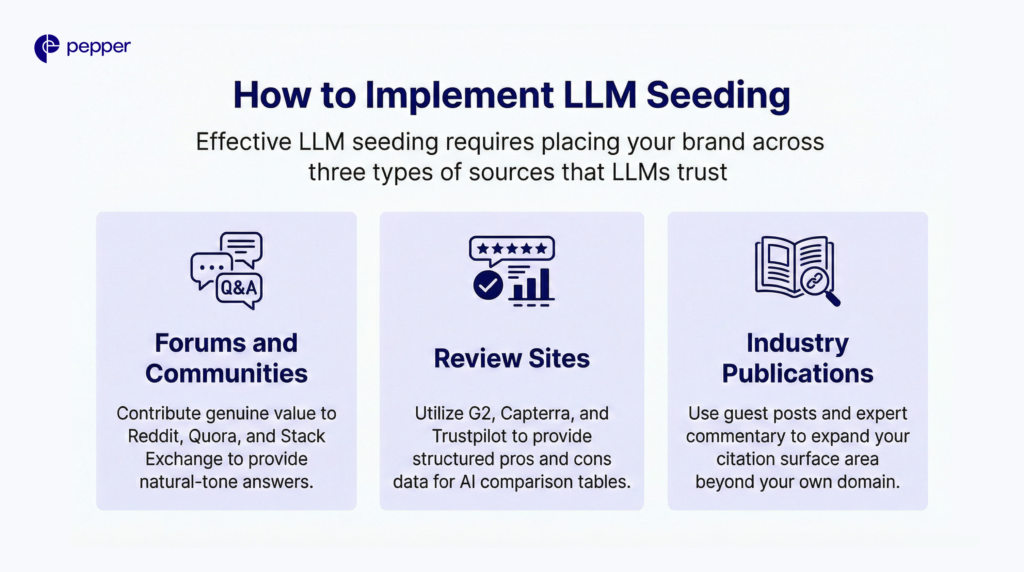

Effective LLM seeding requires placing your brand across three types of sources that LLMs trust.

Seed High-Authority Third-Party Platforms

AI models heavily weight user-generated content because it represents real human answers. Your priority platforms:

- Forums and Communities: Reddit, Quora, and Stack Exchange are goldmines for training data. Contribute genuine value—AI prioritizes “helpfulness” over popularity. Models seek clear, direct answers in a natural tone, not posts optimized for upvotes.

- Review Sites: G2, Capterra, and Trustpilot provide structured pros/cons data that LLMs use to build comparison tables. Encourage detailed customer reviews that mention specific use cases and outcomes.

- Industry Publications: Guest posts, expert commentary, and contributed articles in trusted publications expand your citation surface area beyond your own domain.

Structure Content for Citation

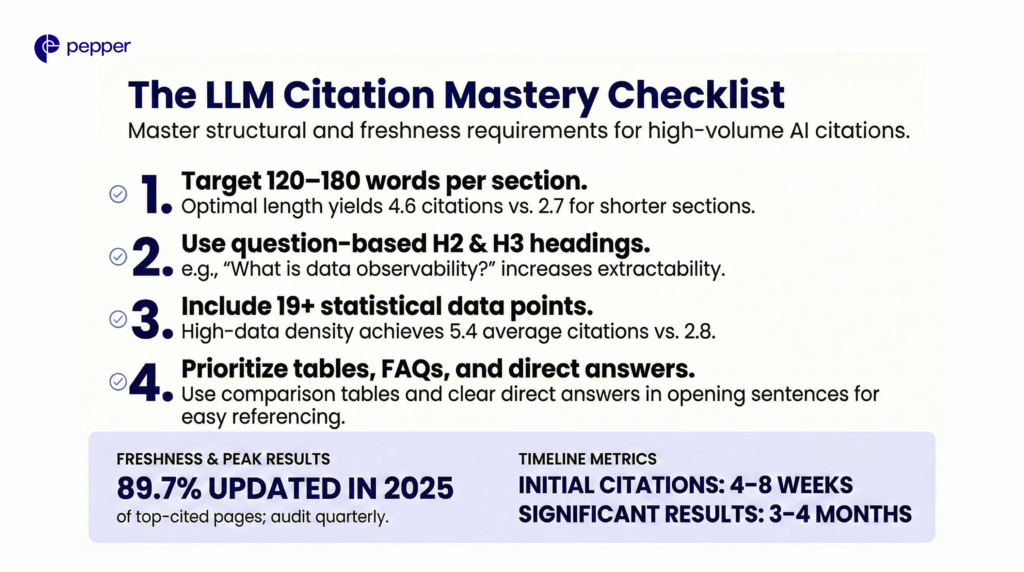

LLMs extract content differently from search engines. Research shows pages with section lengths of 120-180 words between headings perform best, averaging 4.6 citations compared to 2.7 citations for sections under 50 words.

Start with a clear question-answer format. A heading like “What is data observability” is stronger than “Understanding observability”—literal phrasing increases extractability.

Content with 19 or more statistical data points averages 5.4 citations, compared to 2.8 for pages with minimal data. Include quotable sections, comparison tables, and FAQ formats that LLMs can directly reference.

✓ Content Structure Checklist

- Section lengths between 120 and 180 words
- Question-based H2 and H3 headings
- Statistical data points (19+ for maximum citations)
- Comparison tables for product/concept differentiation
- FAQ sections matching common user queries
- Clear, direct answers in the opening sentences of each section
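The first three checklist items lend themselves to an automated check. Below is a minimal sketch in Python, assuming your pages are stored as Markdown with `##` and `###` headings. The function names, the list of question openers, and the number-counting pattern are illustrative assumptions rather than part of any published methodology.

```python
import re

# Matches Markdown H2/H3 headings only ("## ..." or "### ...").
HEADING = re.compile(r"^#{2,3}\s+(\S.*?)\s*$")
# Assumed list of question openers; extend it for your own content.
QUESTION_STARTS = {"what", "how", "why", "which", "when", "who",
                   "can", "does", "is", "should"}
# Number-like tokens such as 19, 4.6, 1,000 or 89.7%.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def split_sections(markdown_text):
    """Return (heading, body_text) pairs, one per H2/H3 section."""
    sections = []
    for line in markdown_text.splitlines():
        match = HEADING.match(line)
        if match:
            sections.append((match.group(1), []))
        elif sections:
            sections[-1][1].append(line)
    return [(heading, " ".join(lines)) for heading, lines in sections]


def audit_sections(markdown_text, min_words=120, max_words=180):
    """Report word count and heading style for each section."""
    report = []
    for heading, body in split_sections(markdown_text):
        words = len(body.split())
        first_word = heading.split()[0].lower()
        report.append({
            "heading": heading,
            "words": words,
            "in_range": min_words <= words <= max_words,
            "question_heading": heading.endswith("?")
            or first_word in QUESTION_STARTS,
        })
    return report


def count_numeric_tokens(text):
    """Rough proxy for statistical data points: count number-like tokens."""
    return len(NUMBER.findall(text))


# Example: audit a tiny made-up page.
page = "## What is data observability?\nA short answer.\n"
for row in audit_sections(page):
    print(row)
```

Counting number-like tokens overstates real data points (dates and version numbers count too), so treat that figure as a prompt for manual review rather than a score.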

Maintain Content Freshness

Among ChatGPT’s top 1,000 cited pages, 89.7% were updated in 2025. Because training data has a cutoff date, LLMs lean on real-time retrieval for current information, and retrieval tends to prioritize recently updated pages.

Audit your highest-value content quarterly. Update statistics, add recent examples, and refresh publication dates. This signals to LLMs that your content reflects current reality.
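A quarterly audit can start from a simple list of pages and their last-updated dates. The sketch below assumes you can export that list from your CMS; the 90-day threshold stands in for "quarterly", and the function name and sample URLs are hypothetical.

```python
from datetime import date


def stale_pages(pages, today, max_age_days=90):
    """Return URLs last updated more than max_age_days ago, oldest first."""
    overdue = [
        (today - updated, url)
        for url, updated in pages.items()
        if (today - updated).days > max_age_days
    ]
    # Largest age first, so the most neglected page tops the list.
    return [url for _, url in sorted(overdue, reverse=True)]


# Hypothetical export: URL -> date of last substantive update.
pages = {
    "/guide-a": date(2025, 1, 10),
    "/guide-b": date(2025, 5, 1),
    "/guide-c": date(2024, 11, 1),
}
print(stale_pages(pages, today=date(2025, 6, 1)))
```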

Most organizations see initial AI citations within 4-8 weeks of implementing comprehensive LLM seeding strategies, with significant results emerging after 3-4 months of consistent effort.

| Remember: LLM seeding success requires spreading your brand across the third-party sources AI trusts most—Reddit, review sites, and publications—while structuring content for easy extraction and citation. |

How to Measure LLM Seeding Success

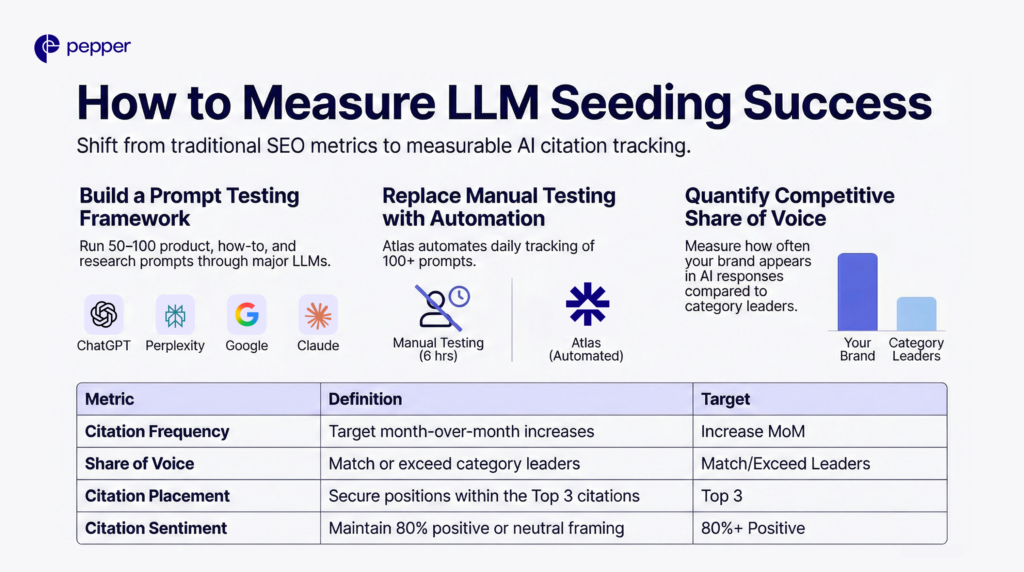

Without visibility into AI search results, you’re optimizing blind. Traditional SEO metrics, such as rankings, CTR, and page speed, don’t predict AI citation performance.

Track Brand Presence Across LLMs

Create 50-100 prompts matching buyer intent: product comparisons, how-to queries, and research questions. Run each through ChatGPT, Perplexity, Google AI Overviews, and Claude. Document where your brand appears, citation placement, and sentiment.

Manual testing takes 4-6 hours for 50 prompts. Atlas—Pepper’s intelligence layer—automates this process, tracking brand presence across LLM platforms daily and showing competitive share-of-voice. The platform monitors 100+ category prompts automatically, providing baseline metrics that turn LLM seeding from guesswork into a measurable strategy.
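If you log results by hand, a small helper keeps the "where does the brand appear" step consistent. This sketch assumes you have already saved each AI response as plain text. It uses order of first mention as a stand-in for citation placement, which is a simplification, and the brand names are placeholders.

```python
import re


def brand_placements(response_text, brands):
    """Map each mentioned brand to its rank by first appearance (1 = first)."""
    first_seen = {}
    for brand in brands:
        # Whole-name, case-insensitive match; lookarounds avoid partial hits.
        pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
        match = re.search(pattern, response_text, flags=re.IGNORECASE)
        if match:
            first_seen[brand] = match.start()
    ordered = sorted(first_seen, key=first_seen.get)
    return {brand: rank for rank, brand in enumerate(ordered, start=1)}


# Made-up response text with placeholder brand names.
response = "Top options include Globex and Acme. Globex is often praised."
print(brand_placements(response, ["Acme", "Globex", "Initech"]))
```

Brands that never appear are left out of the result, which makes a missing key the signal for "not mentioned". Sentiment still needs a human read or a separate classifier.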

Measure Share of Voice

Compare your citation frequency against competitors. If competitors appear in 8 of 10 top category queries while you appear in 2, you’ve quantified the visibility gap.

| Metric | What It Measures | Target |
|---|---|---|
| Citation Frequency | How often the brand appears in AI responses | Increase month-over-month |
| Share of Voice | Brand citations vs. competitors | Match or exceed category leaders |
| Citation Placement | Position within AI response | Top 3 citations preferred |
| Citation Sentiment | Positive/neutral/negative framing | 80%+ positive or neutral |
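The first two metrics reduce to simple arithmetic once each prompt run is logged as the set of brands it mentioned. The sketch below uses one reasonable pair of definitions, stated here as assumptions: citation frequency is the fraction of prompts that mention a brand, and share of voice is a brand's mentions divided by all brand mentions. The names `You` and `Rival` are placeholders.

```python
from collections import Counter


def citation_frequency(mentions_by_prompt, brand):
    """Fraction of prompts whose response mentions the brand."""
    if not mentions_by_prompt:
        return 0.0
    hits = sum(1 for brands in mentions_by_prompt if brand in brands)
    return hits / len(mentions_by_prompt)


def share_of_voice(mentions_by_prompt):
    """Each brand's mentions as a fraction of all brand mentions."""
    counts = Counter(b for brands in mentions_by_prompt for b in brands)
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}


# Ten prompts: the competitor appears in 8, your brand in 2.
runs = [{"Rival"}] * 6 + [{"Rival", "You"}] * 2 + [set()] * 2
print(citation_frequency(runs, "You"))
print(share_of_voice(runs))
```

Tracking both numbers is useful because they diverge: a brand can appear in many prompts yet hold a small share of voice when every response lists a dozen competitors.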

What’s the Difference Between LLM Seeding and GEO?

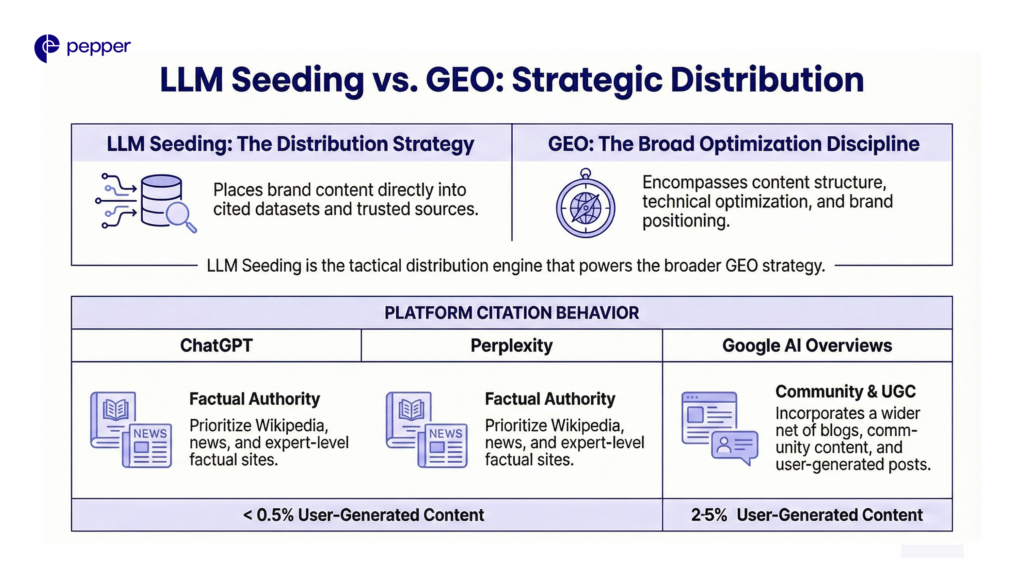

LLM seeding is a core input to GEO. Generative Engine Optimization is the broader discipline of optimizing for AI-generated answers, encompassing content structure, technical optimization, and brand positioning.

LLM seeding focuses specifically on placing your brand into the datasets and sources that LLMs cite. Think of it as the distribution strategy within your larger GEO framework.

Adding relevant statistics, quotations, and citations can boost content visibility in generative engines. LLM seeding provides the distribution layer that ensures optimized content reaches the sources AI models trust.

| Quick Tip: LLM seeding and GEO work together. Seeding handles distribution across trusted sources; GEO ensures your content is structured for maximum citation when LLMs retrieve it. |

Platform-Specific Considerations

Different AI platforms cite different source types. ChatGPT and Perplexity lean toward high-authority factual sources—Wikipedia, news, and expert sites. Google’s AI Overviews cast a wider net, heavily incorporating blogs, community content, and UGC.

ChatGPT rarely cites user-generated content (under 0.5% of citations), while Google’s AI Mode cites it more often (2-5%). Your seeding strategy should account for which platforms your buyers use most.

Own Your Brand’s Presence in AI Search

Atlas automates visibility tracking across LLM platforms, showing competitive share-of-voice and flagging optimization opportunities. Combine that tracking with strategic content distribution across Reddit, review sites, and publications to build the citation presence that converts AI search traffic into pipeline.

Book a demo to see your current AI visibility baseline.

Frequently Asked Questions

How long does LLM seeding take to show results?

Most organizations see initial AI citations within 4-8 weeks of implementing comprehensive strategies. Significant share-of-voice improvements typically emerge after 3-4 months of consistent effort across multiple trusted platforms.

Does LLM seeding replace traditional SEO?

No—LLM seeding complements SEO. Strong search rankings still matter, but they don’t guarantee AI citations. Nearly 90% of ChatGPT citations come from URLs ranked position 21 or lower, so you need both strategies working together.

Which platforms matter most for LLM seeding?

Reddit leads with 40.1% citation frequency, followed by Wikipedia at 26.3%. Review sites like G2 and Capterra also rank highly because they provide structured comparison data LLMs use to build recommendations.

How do I measure LLM seeding success?

Track citation frequency, share of voice against competitors, citation placement within AI responses, and sentiment. Manual tracking requires testing 50+ prompts across multiple LLMs; automation tools like Atlas streamline ongoing measurement.

Can smaller brands compete with larger ones through LLM seeding?

Yes. Brands like Adored Beast appear alongside Purina and Zesty Paws in ChatGPT pet product recommendations despite much smaller market share. Strategic third-party presence can level the playing field in AI search.

What content format gets cited most by LLMs?

Content with section lengths of 120-180 words between headings, question-based headers, and 19+ statistical data points performs best. FAQ formats and comparison tables also increase citation likelihood.

How is LLM seeding different from digital PR?

Digital PR focuses on brand awareness and backlinks through media coverage. LLM seeding specifically targets sources that AI models trust and cite—including UGC platforms, review sites, and community forums that traditional PR often overlooks.
